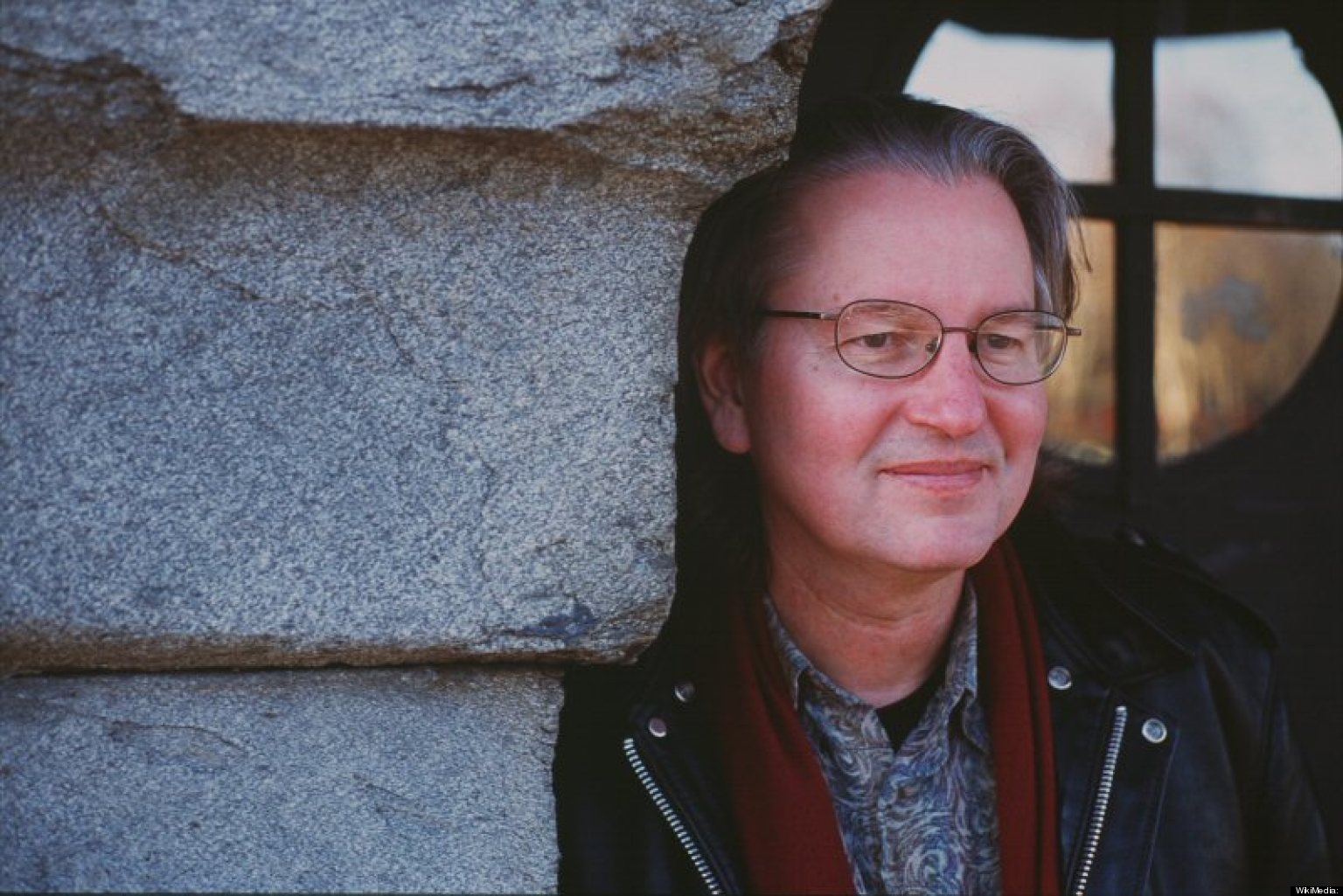

Bruce Sterling is a prominent science fiction writer and a pioneer of the cyberpunk genre. His cyberpunk novels Heavy Weather (1994), Islands in the Net (1988), Schismatrix (1985) and The Artificial Kid (1980) earned him the nickname “Chairman Bruce”. Apart from his writing, Bruce Sterling is also a professor of internet studies and science fiction at the European Graduate School. He has contributed to several projects in futurist theory, founded an environmental aesthetic movement and edited anthologies, and he continues to write for several magazines, including Wired, Discover, Architectural Record and The Atlantic.

For the interview below, we had the honor of hosting Bruce Sterling at our Next Nature Network headquarters to talk about the convergence of humans and machines. Sterling weighs in on the issue with a rather challenging perspective.

Lots of people are actually talking about and also investing a lot of money in this idea of convergence of the machine and humans. What are your thoughts on this?

That convergence will not happen, because the ambition is basically metaphysical. It will recede over the horizon like a heat mirage. We are never going to get there. It works like this: first, far-fetched metaphysical propositions. Then an academic computer scientist will try and build one in the lab. Some aspect of that can actually be commercialized, distributed and sold.

This is the history of artificial intelligence. We do not have Artificial Intelligence today, but we do have other stuff like computer vision systems, robotic abilities to move around, gripper systems. We have bits and pieces of the grand idea, but those pieces are big industries. They do not fit together to form one super thing. Siri can talk, but she cannot grip things. There are machines that grip and manipulate, but they do not talk. You end up with this unbundling of the metaphysical ideas and their replacement by actual products and services. Those products exist in the marketplace like most other artifacts that we have: like potato chips, bags, shoes, Hollywood products, games.

They should be seen in that context. You should not dress these commercial products up and say, “Someday soon we will have the Artificial Intelligence Super Ghost.” I know there are guys in the business who are into that vision, but I do not think that the real captains of the industry, who are making multimillion-dollar investments, believe any of that.

Yesterday we had quite an interesting discussion about the future of humanity, which is also related to the concept of artificial intelligence. It is argued that 2025 will be the singularity point. What is your take on that?

You do not want Siri to be more like Alan Turing. You want Siri to be more like Apple. Because Apple owns Siri

There will not be a Singularity. I think that artificial intelligence is a bad metaphor. It is not the right way to talk about what is happening. So, I like to use the terms "cognition" and "computation". Cognition is something that happens in brains, physical, biological brains. Computation is a thing that happens with software strings on electronic tracks that are inscribed out of silicon and put on fibre board.

They are not the same thing, and saying that they are makes the same mistake as in earlier times, when people said that human thought was like a steam engine. The idea comes from metaphysical problems: Is mathematics thinking? If a machine can do mathematics, is it thinking? If a machine can play chess, is it thinking? There are a lot of things that machines can do, that algorithms can do, that software can do. They have very little to do with cognition. When you try to fit them into the Bonsai box of intelligence, you actually limit the technology. It is as if you are trying to build a cargo aircraft, and you insist that it should also lay eggs because it can fly. It is a flying machine, it has wings and it flies, and you can even call it a "bird". But aircraft are not actually birds, and the more bird-like you try to make them, the more you get in the way of the potential of the technology.

Why would a 747 flap its wings? Why would it eat? It is as if you imagine that the technology is somehow mystically inspiring flying machines, and that they want to be more like an eagle. It is just a bad metaphor, and it really gets in the way.

An entity like Siri, for instance, is not aspiring to become more human; Siri would want to be many times more efficient than that. Siri does not have one conversation like the conversation we are having here. Siri has hundreds of thousands of conversations at once. It wants to look through more databases faster; it does not want to read its way through a book, quietly pondering, like Alan Turing might have done.

You do not want Siri to be more like Alan Turing; you want Siri to be more like Apple Inc. You want Siri to do everything that Apple can do: geophysically locate things, run big databases, find apps for you, look for movie locations. Alan Turing does not know every movie in California! You are getting in the way when you say, “Siri, why can’t you be more like a mid-20th-century gay mathematician, so you can pass the Turing Test?” That would be metaphysically pleasing; it would have made Turing’s point. Me in one room, Siri in the other room, and we seem exactly the same; therefore, cognition equals computation. Cognition does not equal computation. You do not even want cognition to equal computation. You are getting in the way of making computation do things that are of genuine interest.

You make the point that cognition and computation should be kept separate. Why do you think these two separate concepts get mixed up so much?

They cannot get their heads around the idea that computation is not thinking, that it is something different.

They are just taken in by Alan Turing’s mystification. If you are a human being, and you are doing computation, you are trying to multiply 17 times five in your head. It feels like thinking. Machines can multiply, too. They must be thinking. They can do math and you can do math.

But the math you are doing is not really what cognition is about. Cognition is about stuff like seeing, maneuvering, having wants, desires. Your cat has cognition. Cats cannot multiply 17 times five. They have got their own umwelt (environment). But they are mammals, and you are a mammal. They belong to a class that includes you. You are much more like your house cat than you are ever going to be like Siri. Compared with you and Siri converging, you and your house cat can converge a lot more easily. You can take the imaginary technologies that many post-human enthusiasts have talked about, and you could afflict all of them on a cat. Every one of them would work on a cat. The cat is an ideal laboratory animal for all these transitions and convergences that we want to make for human beings.

But we never talk about a roboticized cat, an augmented cat, a super intelligent cat. Why? Because we are stuck in this metaphysical trench where we think it is all about humanity's states of mind. It is not! We humans do not always have conscious states of mind: we sleep at night. Computers don't have these behaviors. We are elderly, we forget what is going on. We are young, we do not know how to speak yet. That is cognition. You never see a computer that is so young it cannot speak.

Interview by Menno Grootveld and Koert van Mensvoort.

Edited by Yunus Emre Duyar.

Image via Huffington Post
